Traditional data warehouses, such as managed SQL Server instances on Azure, have provided a reliable foundation for structured reporting and analytics. However, they are increasingly misaligned with the scale, speed, and complexity of modern data needs.

A modern lakehouse architecture, built on distributed processing engines like Apache Spark and low-cost cloud storage, addresses these challenges directly. This post outlines the differences between the two approaches and offers a high-level migration plan appropriate for a mid-market organization.

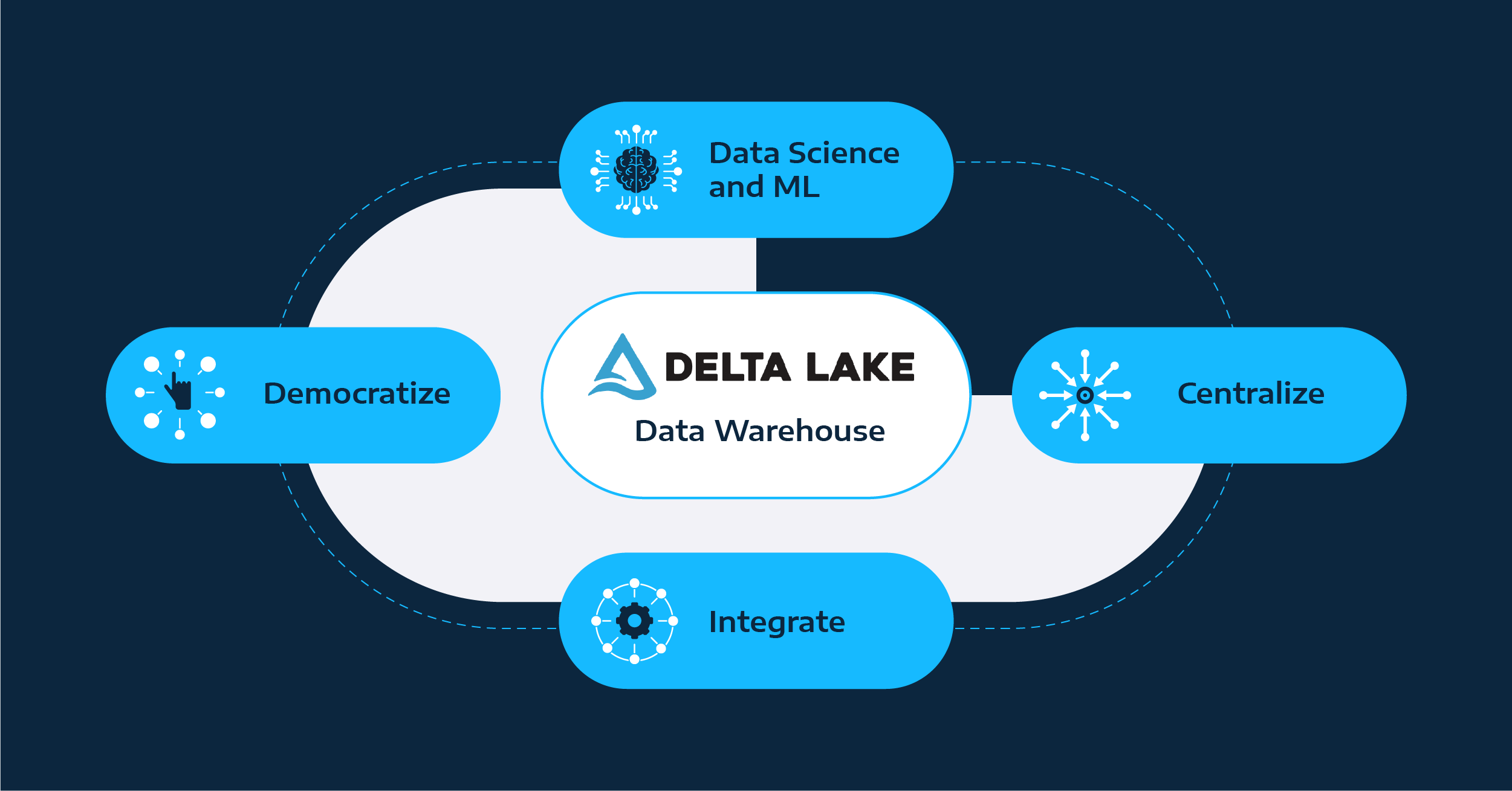

Lakehouse architectures enable faster, more flexible decision-making across the organization.

For Boards and Executives:

Mid-Market Technology-Enabled Company (e.g., $100M ARR SaaS or services business)

| Phase | Activities | Estimated Duration | Level of Effort |
| --- | --- | --- | --- |
| Discovery | Inventory existing data sources, pipelines, reporting dependencies. Identify high-value datasets for initial migration. | 2–4 weeks | Internal data engineering + external advisory (if needed) |
| Lakehouse Foundation | Provision blob storage. Set up a Spark environment (Databricks, Azure Synapse, or open-source Spark). Establish access controls and governance. | 3–6 weeks | 1–2 engineers + IT/infosec input |
| Pilot Migration | Migrate a key dataset (e.g., product usage, customer telemetry) to the lakehouse. Validate queries, performance, and reporting accuracy. | 4–6 weeks | Data engineering + analytics team |
| Platform Integration | Connect BI tools (e.g., Power BI, Tableau) to the lakehouse. Train analysts on schema-on-read and exploratory workflows. | 2–3 weeks | Enablement + training |
| Gradual Cutover | Migrate additional datasets and deprecate legacy ETL pipelines incrementally. Monitor cost and performance. | 2–3 months | Ongoing; may run parallel for some time |
| Optimization | Apply performance tuning, caching, job scheduling. Evaluate opportunities for AI/ML use cases. | Continuous | Data team + stakeholders |
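The pilot phase calls for validating reporting accuracy after a dataset is migrated. One simple way to approach that is to compute the same summary metrics on both systems and compare them. The sketch below is a minimal illustration in plain Python, not a prescribed method: the metric names, the sample values, and the `reconcile` helper are all hypothetical, and in practice the two dictionaries would be filled from queries against the legacy warehouse and the lakehouse.

```python
from dataclasses import dataclass
from math import isclose


@dataclass(frozen=True)
class MetricCheck:
    """One summary metric compared across the two systems."""
    name: str
    legacy: float
    lakehouse: float
    rel_tol: float = 1e-9

    @property
    def passed(self) -> bool:
        # A metric missing on one side is stored as NaN, and NaN never
        # compares close to anything, so missing metrics fail the check.
        return isclose(self.legacy, self.lakehouse, rel_tol=self.rel_tol)


def reconcile(legacy: dict[str, float],
              lakehouse: dict[str, float],
              rel_tol: float = 1e-9) -> list[MetricCheck]:
    """Compare every metric that appears in either system."""
    missing = float("nan")
    return [
        MetricCheck(name,
                    legacy.get(name, missing),
                    lakehouse.get(name, missing),
                    rel_tol)
        for name in sorted(legacy.keys() | lakehouse.keys())
    ]


# Hypothetical totals; real values would come from queries on each system.
legacy_totals = {"row_count": 120_000, "active_accounts": 4_310, "events_sum": 9_875_412.0}
lakehouse_totals = {"row_count": 120_000, "active_accounts": 4_308, "events_sum": 9_875_412.0}

results = reconcile(legacy_totals, lakehouse_totals)
failures = [check.name for check in results if not check.passed]
print(failures)  # ['active_accounts']
```

A check like this only catches aggregate drift. It says nothing about query performance, which the pilot would need to measure separately.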

Total Timeframe: ~4 to 6 months for functional parity with legacy systems; ~12 months for full modernization and optimization.
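As a rough cross-check on the parity estimate, the phase durations from the plan can be summed as if every phase ran strictly back to back. The sketch below does that arithmetic, assuming about 4.33 weeks per month (52 / 12), which is a conversion chosen here for illustration. Optimization is left out because the plan lists it as continuous.

```python
WEEKS_PER_MONTH = 52 / 12  # assumed conversion factor, about 4.33

# (phase, low estimate in weeks, high estimate in weeks), from the plan above.
phases = [
    ("Discovery", 2, 4),
    ("Lakehouse Foundation", 3, 6),
    ("Pilot Migration", 4, 6),
    ("Platform Integration", 2, 3),
    ("Gradual Cutover", 2 * WEEKS_PER_MONTH, 3 * WEEKS_PER_MONTH),
]

# Sum the low and high ends separately, assuming no overlap between phases.
low_weeks = sum(low for _, low, _ in phases)
high_weeks = sum(high for _, _, high in phases)

low_months = low_weeks / WEEKS_PER_MONTH
high_months = high_weeks / WEEKS_PER_MONTH

print(f"{low_months:.1f} to {high_months:.1f} months")  # 4.5 to 7.4 months
```

Run sequentially, the phases come to roughly 4.5 to 7.4 months, so the upper end of the ~4 to 6 month figure presumably assumes that some phases overlap.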

Lakehouse architecture is not a tactical upgrade. It is a structural shift that aligns data infrastructure with modern business requirements: flexibility, scale, and speed.

Organizations that make this transition gain the ability to act on data faster, reduce infrastructure complexity, and support both operational reporting and advanced analytics from a single foundation.